Data strategies are more complex than they’ve ever been: SaaS gives companies access to data from more data sources than ever before. ETL tools make it possible to transform vast quantities of data into actionable business intelligence.

Consider the amount of raw data available to a manufacturer. In addition to the data generated by sensors in the facility and the machines on an assembly line, the company also collects marketing, sales, logistics, and financial data (often using a SaaS tool). All of that data must be extracted, transformed, and loaded into a new destination for analysis.

Incremental loading compares incoming data with what’s already on hand, and only produces additional records if new and unique information is found. This architecture allows smaller, less expensive data warehouses to maintain and manage business intelligence.
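As a rough illustration of the incremental loading comparison described above, the sketch below appends only the records whose key is not already in the destination. The function name `incremental_load`, the `id` key, and the sample rows are assumptions made for this example, not part of any particular ETL tool.

```python
def incremental_load(destination, incoming, key="id"):
    """Append only incoming rows whose key is not yet in the destination.

    Returns the list of rows that were actually added.
    """
    seen = {row[key] for row in destination}  # keys already on hand
    added = []
    for row in incoming:
        if row[key] not in seen:
            destination.append(row)
            seen.add(row[key])  # also guards against duplicates within the batch
            added.append(row)
    return added


# Hypothetical sample data: the warehouse already holds ids 1 and 2.
warehouse = [{"id": 1, "sku": "A-100"}, {"id": 2, "sku": "B-200"}]
batch = [{"id": 2, "sku": "B-200"}, {"id": 3, "sku": "C-300"}]

new_rows = incremental_load(warehouse, batch)
print(len(new_rows), len(warehouse))
```

A full load, by contrast, would append every row in `batch` regardless of what is already stored, which is why the destination grows with each run.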

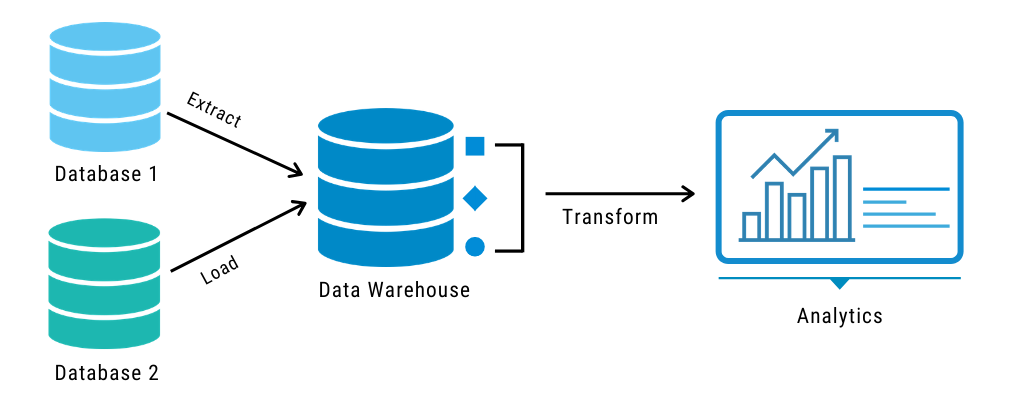

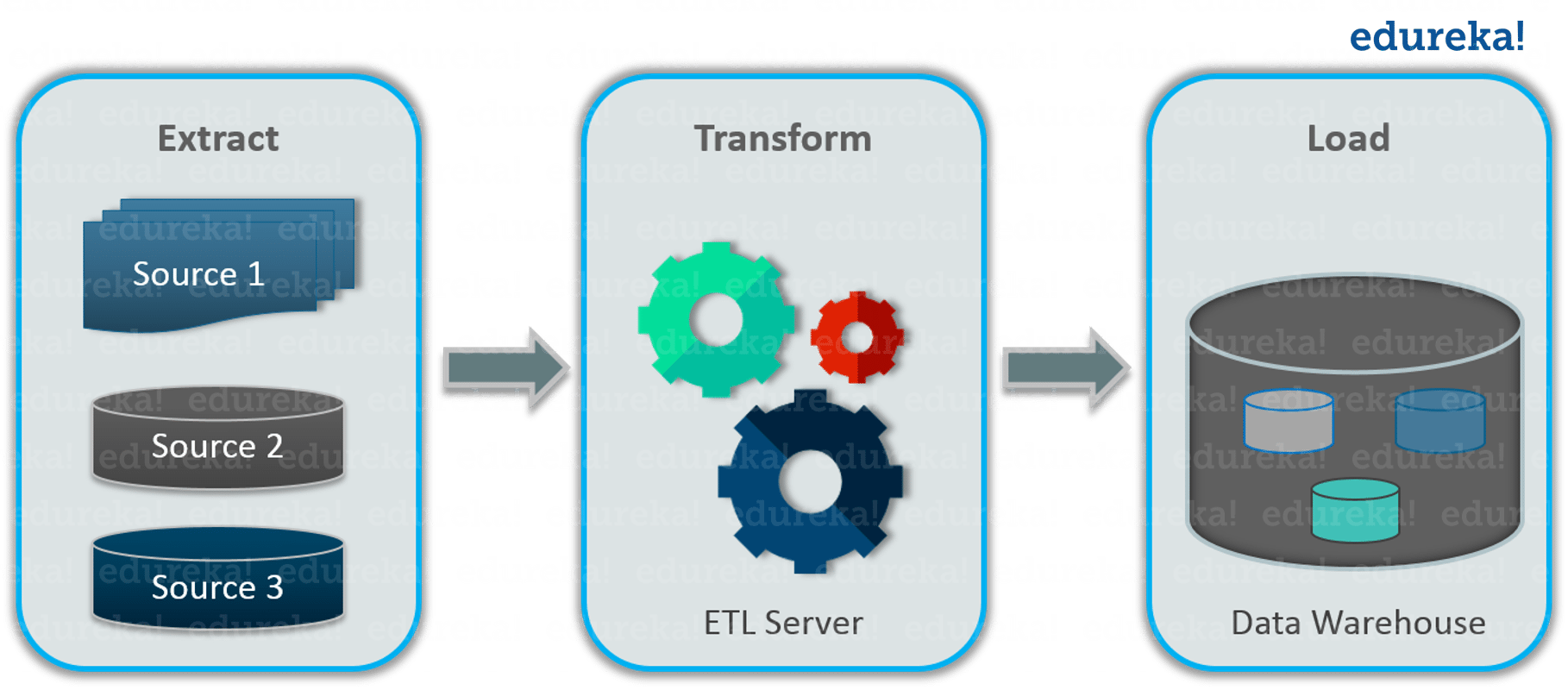

To execute a complex data strategy, data must be able to travel freely between systems and apps.

Before data can be moved to a new destination, it must first be extracted from its source, such as a data warehouse or data lake. In this first step of the ETL process, structured and unstructured data is imported and consolidated into a single repository. Volumes of data can be extracted from a wide range of data sources, including cloud, hybrid, and on-premises environments.

Although it can be done manually with a team of data engineers, hand-coded data extraction can be time-intensive and prone to errors. ETL tools automate the extraction process and create a more efficient and reliable workflow.

The final step in the ETL process is to load the newly transformed data into a new destination (a data lake or data warehouse). Data can be loaded all at once (full load) or at scheduled intervals (incremental load).

Full loading - In an ETL full loading scenario, everything that comes from the transformation assembly line goes into new, unique records in the data warehouse or data repository. Though there may be times this is useful for research purposes, full loading produces datasets that grow with every load and can quickly become difficult to maintain.

Incremental loading - A less comprehensive but more manageable approach is incremental loading.
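The extraction step described above, in which data from several sources is consolidated into a single repository, might look something like the following minimal sketch. The two in-memory sources (`SALES_CSV`, `SENSOR_JSON`), the helper names, and the `_source` tag are all hypothetical; a real pipeline would read from files, databases, or APIs instead.

```python
import csv
import io
import json

# Hypothetical stand-ins for two sources: a CSV export and a JSON API response.
SALES_CSV = "order_id,amount\n1001,250.00\n1002,99.50\n"
SENSOR_JSON = '[{"machine": "press-1", "temp_c": 71.5}]'


def extract_csv(text, source):
    """Parse CSV text into dicts, tagging each row with its source."""
    return [dict(row, _source=source) for row in csv.DictReader(io.StringIO(text))]


def extract_json(text, source):
    """Parse a JSON array of objects, tagging each row with its source."""
    return [dict(row, _source=source) for row in json.loads(text)]


# Consolidate everything into a single staging list for later transformation.
staging = extract_csv(SALES_CSV, "sales") + extract_json(SENSOR_JSON, "sensors")

for record in staging:
    print(record)
```

Note that the CSV values arrive as strings while the JSON values keep their types; reconciling differences like that is left to the transformation step.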

Quick answer? ETL stands for "Extract, Transform, and Load." In the world of data warehousing, if you need to bring data from multiple different data sources into one centralized database, you must first extract the data from its sources, transform it by deduplicating it, combining it, and ensuring quality, and then load it into the target database.

Most businesses manage data from a variety of data sources and use a number of data analysis tools to produce business intelligence. ETL tools enable data integration strategies by allowing companies to gather data from multiple data sources and consolidate it into a single, centralized location. ETL tools also make it possible for different types of data to work together.

A typical ETL process collects and refines different types of data, then delivers the data to a data lake or data warehouse such as Redshift, Azure, or BigQuery. ETL tools also make it possible to migrate data between a variety of sources, destinations, and analysis tools. As a result, the ETL process plays a critical role in producing business intelligence and executing broader data management strategies. We are also seeing the process of Reverse ETL become more common, where cleaned and transformed data is sent from the data warehouse back into the business application.

The ETL process consists of three steps that enable data integration from source to destination: data extraction, data transformation, and data loading.
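To make the three steps concrete, here is a small end-to-end sketch under stated assumptions: the `crm` and `shop` sources are hard-coded, the `customers` table and its columns are invented for the example, and an in-memory SQLite database stands in for the data warehouse. It is meant to show the shape of extract, transform, and load, not how any specific ETL tool works.

```python
import sqlite3


def extract():
    """Return raw rows from two hypothetical sources (hard-coded here)."""
    crm = [{"email": "Ana@Example.com", "name": "Ana"},
           {"email": "bo@example.com", "name": "Bo"}]
    shop = [{"email": "ana@example.com", "name": "Ana"},
            {"email": "", "name": "Unknown"}]
    return crm + shop  # combine the sources


def transform(rows):
    """Normalize emails, drop rows that fail a quality check, deduplicate."""
    clean = {}
    for row in rows:
        email = row["email"].strip().lower()
        if not email:  # quality check: an email address is required
            continue
        # Keep the first row seen for each normalized email.
        clean.setdefault(email, {"email": email, "name": row["name"]})
    return list(clean.values())


def load(rows, conn):
    """Write the transformed rows into the destination table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers (email TEXT PRIMARY KEY, name TEXT)"
    )
    conn.executemany(
        "INSERT INTO customers (email, name) VALUES (:email, :name)", rows
    )
    conn.commit()


conn = sqlite3.connect(":memory:")  # in-memory stand-in for a warehouse
load(transform(extract()), conn)
loaded = conn.execute("SELECT email, name FROM customers ORDER BY email").fetchall()
print(loaded)
```

Keeping each step as its own function means any one of them can be swapped out (a different source, a stricter quality rule, a different destination) without touching the others.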
